Recently tested the use of Certutil to download a file and look for the artefacts. I didn’t find much in the DFIR realm about what this might look like on a system so thought best to post it up!

Certutil is a super useful program that does a lot of things. You can use it to encode or decode files, hash them, and download them from the Internet (among a lot more). More information on it can be found here and under the LOLBAS project too!

Certutil keeps a cache stored in a few of the locations (possibly more, but this is what I found).

- \Windows\System32\config\systemprofile\AppData\LocalLow\Microsoft\CryptnetUrlCache\Metadata

- .\Windows\SysWOW64\config\systemprofile\AppData\LocalLow\Microsoft\CryptnetUrlCache\Content

- \Users\%userprofile%\AppData\LocalLow\Microsoft\CryptnetUrlCache

From my reading, the former will be used “if you run IIS with a local user identity that has administrator abilities”. When I tested I was running under the local user, so the artefacts showed up within my user profile.

I opened this folder and deleted everything from the “Content” and “Metadata” subdirectories. More on that later.

To download a file I ran the following command:

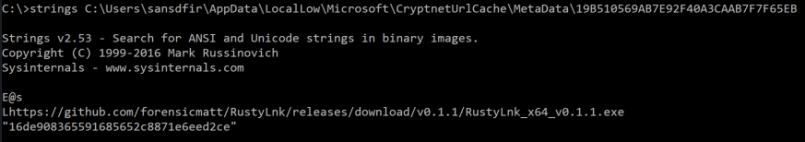

certutil.exe -urlcache -split -f "https://github.com/forensicmatt/RustyLnk/releases/download/v0.1.1/RustyLnk_x64_v0.1.1.exe"

(HI MATT!)

Oddly enough, I was located in C:\ at the time and it downloaded the file onto my desktop.

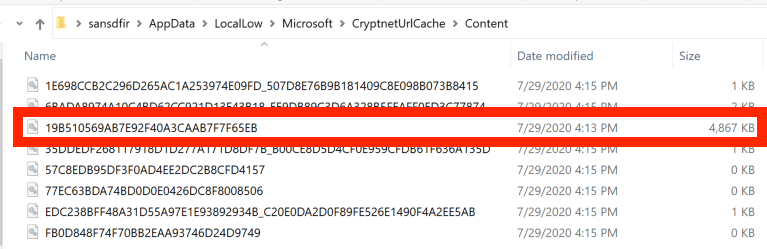

This had the effect of populating my Content and Metadata directories with, surprise surprise, content and metadata.

The EXE that I downloaded stands out pretty quickly because it’s significantly larger than the other files.

If you take the filename, and look for the corresponding file in the Metadata folder we can see where it came from, as well as the files MD5 hash. I haven’t looked for a breakdown of the file format that the Metadata files use, if someone has it let me know and I’ll update the post.

If I re-downloaded the same file, I noticed that the same file within Content was just updated (last modified changed to the time I downloaded the file) – A new file wasn’t downloaded. This makes sense so that the content is just updated and the folder doesn’t fill up with duplicates. I’m not entirely sure of how the naming convention works; it looks like an MD5 hash but it doesn’t match the MD5 of the file itself (so could have additional data).

I also don’t know how long the file will remain here before it’s cleared out; based on a recent case I don’t think very quickly.

Overall, something worth adding to your review process is:

- File content should be reviewed in some fashion – large files, exes, scripts; probably shouldn’t be in here without an explanation.

- File signature verification

- Virus scan

- VirusTotal/OSINT lookups

- Malware analysis

- Extract all the URLs from the metadata folder and build a list of accepted URLs, obviously anything from a random IP should be investigated further.

- Bstrings -q -d works well here to highlight suspicious URLs.

Edit: 2020-08-01

Matt Green made an excellent point

So it may be worth parsing Internet cache and seeing whether there are hits there too!

If someone tests this on Win10+ please let me know and I’ll update the post.

Edit: 2020-09-13

AbdulRhman Alfaifi has done some great research since I wrote this post. Check it out here

[…] https://thinkdfir.com/2020/07/30/certutil-download-artefacts […]

LikeLike

[…] ThinkDFIR Certutil download artefacts […]

LikeLike

[…] https://thinkdfir.com/2020/07/30/certutil-download-artefacts […]

LikeLike